Plivo co-founder and CEO Venky B. spoke at a recent online summit on voice-enabled experiences. The event was sponsored by speech-to-text platform Deepgram, which provides speech APIs for AI-enhanced transcription.

Deepgram CEO and Co-Founder Scott Stephenson opened the event with a keynote in which he pointed out that voice can bring people together at a time when we're all staying apart. He noted the value of using AI to handle tasks, saving expensive human time for the work humans are best suited to. You can check out the recording of the event for his discussion of the relative ease of labeling images, which is a mature technology, compared with extracting insights from audio.

Stephenson also described several difficulties that have held speech recognition back until now. One-size-fits-all speech models don't account for specific use cases, such as a company's product names or industry acronyms, and they can struggle with accents and background noise. Customers can't improve the accuracy of these off-the-shelf models. AI-assisted technology like Deepgram's can train models to perform better, and better performance, meaning both speed and accuracy, matters for downstream tasks. Finally, to be useful, actions have to be repeatable, which means they have to be cost-effective.

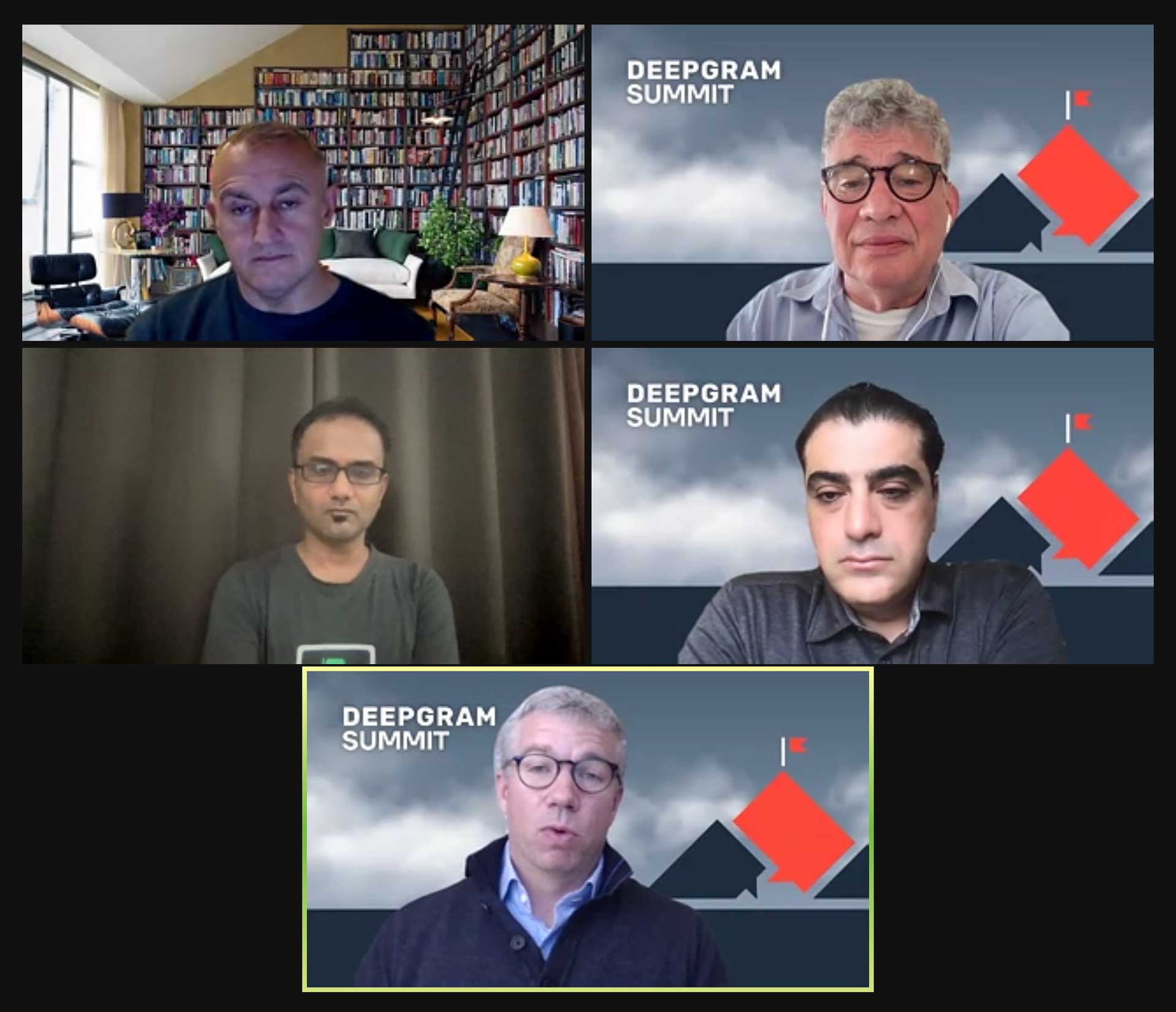

The roundtable Venky participated in, the first of three at the summit, focused on the potential of voice technology and voice data. Dan Miller, Lead Analyst and Founder of Opus Research, moderated the panel, which also included:

- Pete Ellis — Chief Product Officer, Red Box

- Shadi Baqleh — Chief Operating Officer, Deepgram

- Ted McKenna — SVP, Research and Innovation, Tethr

Venky noted that we're seeing more adoption of voice for sales and customer use cases. In the post-COVID world, there's been a huge acceleration in voice interactions, voice transcription, and voice analytics.

Miller cited an Opus Research survey that confirmed a trend toward real-time transcription use cases. Ellis noted that real-time transcription for sales and agent assistance has exploded in the last four months.

Baqleh said that over the last five or six months, half of Deepgram's opportunities have involved real-time assistance. As an example, he cited the benefit of live closed captioning for meetings that include remote workers, and he pointed out that people are now more willing to be recorded than they were before the pandemic.

Ellis raised contact centers as an example, pointing out that contact center agents can have about 50 pieces of metadata per call. Getting a company to adopt voice transcription and analyze the results is a challenge, he said, because people don't know what they don't know.

Venky agreed that agent assist is a huge use case (and, though it didn't come up in the discussion, it's part of the reason for Plivo's new Contacto contact center platform). He pointed out the potential benefit of tagging and categorizing conversations and of using information from support conversations to inform marketing and sales initiatives. McKenna agreed on the value of "closed-loop actions" after conversations, meaning steps taken to make sure customers are happy. Miller also pointed out the value of detecting in real time whether agents have fallen out of compliance with mandated practices.

Miller then asked whether users' growing acceptance of chatbots over the last few years had implications for voicebots. Venky said, "We're seeing hybrid interactions. Internally we call this Jarvis. How do we augment customer assists, even if there's a human being in the interaction? Then how do we take this intelligence back and feed it into the bots?"

McKenna brought up the trend toward automating rote tasks in customer interactions, but asked, “Is it for them or for us? Do customers prefer that? I think that’s an open question.”

Baqleh said, "I think it's both." He said that if you can bring AI into conversations and workflows, you're better off: you'll have faster, more efficient ways to respond to customers and solve their problems. The more you can help customers with their personal workflows, he said, the better off you are.

## How to start exploiting voice technology

In the final part of the discussion, all the participants agreed that voice technology today is scalable and reliable, and each suggested a good way to start using it.

Ellis said to start by capturing information and putting it into a usable format. Then you can figure out what to do with it, for example, using it to see how your company is dealing with its customers.

Baqleh said that if companies don't start doing that now, they're going to fall behind. But, he suggested, "don't boil the ocean": pick one use case, win at that, then expand.

McKenna agreed, saying customer disloyalty is the place to start. Businesses should look for ways to improve situations with unhappy customers.

Venky had the final word, suggesting that companies pick a use case with a high ROI so that, if they execute well, it will have the most impact.

To close the session, Miller pointed out that voice technology isn't just a call center story; it's useful across the whole organization. Multiple touchpoints can benefit from ingesting all conversations and treating that information as valuable input.